Introduction

OpsRamp Copilot supports a Bring Your Own LLM model, allowing you to connect Copilot to your own LLM credentials for full control over model choice, security, and billing.

This lets you use your own enterprise-managed LLMs (currently through Google Vertex AI) while still benefiting from Copilot’s conversational and analytical capabilities.

Note

This release supports only Google Cloud Platform (GCP) Vertex AI models, including Claude Sonnet 4.6 (Anthropic), Gemini 2.5 Flash Lite, Gemini 2.5 Flash, and Gemini 2.5 Pro (Google). Support for additional LLM providers and model families will be added in future releases.

Recommendation

For optimal performance, use the Claude Sonnet 4.6 model.

Default State

- Copilot is disabled by default until an administrator adds valid LLM credentials.

- Once credentials are added and saved, Copilot automatically becomes enabled and available to all authorized users.

Prerequisites

Before configuring Copilot with your own LLM, ensure the following:

Google Cloud Platform Requirements

- A valid GCP project with billing enabled

- Vertex AI API enabled in the project

- Appropriate IAM permissions to create and manage service accounts

Service Account Requirements

Create a service account and ensure it has the following minimum roles:

| Required Role | Purpose |
|---|---|
| Vertex AI User | Allows invoking LLM models |
| Service Account Token Creator | Enables key-based authentication |
| Storage Object Viewer | Needed for models referencing GCS-backed assets |
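As a rough illustration, the roles in the table can be granted from the command line with `gcloud projects add-iam-policy-binding`. The sketch below only builds the command strings and does not run them. The role IDs are the standard IAM identifiers for these display names; the project ID and service account email are hypothetical placeholders, and your organization may grant roles differently (for example, through the console or infrastructure-as-code).

```python
import shlex

# Role display names from the table, mapped to their IAM role IDs.
REQUIRED_ROLES = {
    "Vertex AI User": "roles/aiplatform.user",
    "Service Account Token Creator": "roles/iam.serviceAccountTokenCreator",
    "Storage Object Viewer": "roles/storage.objectViewer",
}


def binding_commands(project_id: str, sa_email: str) -> list[str]:
    """Return one gcloud command string per required role (nothing is executed)."""
    commands = []
    for role_id in REQUIRED_ROLES.values():
        args = [
            "gcloud", "projects", "add-iam-policy-binding", project_id,
            f"--member=serviceAccount:{sa_email}",
            f"--role={role_id}",
        ]
        # shlex.join quotes arguments where needed so the line is shell-safe.
        commands.append(shlex.join(args))
    return commands


# Hypothetical project and service account, for illustration only.
for command in binding_commands(
    "example-project",
    "copilot-sa@example-project.iam.gserviceaccount.com",
):
    print(command)
```

Review the printed commands before running them in a shell that is authenticated against the target project.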

Download the JSON key for this service account. You will paste this key into the Service Account Key field in OpsRamp.
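Because the key must be pasted exactly as exported, a quick local check can catch truncated or mis-copied JSON before it reaches OpsRamp. The following is a minimal sketch, not an OpsRamp tool: the function name is hypothetical, and the field list reflects the usual layout of a GCP service account key file. It checks structure only and does not verify that the key is active or has the required roles.

```python
import json

# Fields normally present in a service account key file exported from GCP.
EXPECTED_FIELDS = ("type", "project_id", "private_key_id", "private_key", "client_email")


def check_service_account_key(raw: str) -> list[str]:
    """Return a list of problems found in the pasted key; an empty list means it looks well-formed."""
    try:
        key = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(key, dict):
        return ["top-level JSON value must be an object"]

    # Report every expected field that is absent or empty.
    problems = [f"missing field: {name}" for name in EXPECTED_FIELDS if not key.get(name)]
    if key.get("type") and key["type"] != "service_account":
        problems.append(f"unexpected type: {key['type']!r}")
    return problems
```

A typical use is to read the downloaded file, pass its contents to `check_service_account_key`, and paste the key into OpsRamp only when the returned list is empty.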

Supported LLM Providers

- Google Cloud Platform (GCP) – Vertex AI

  - Supports both Google Gemini models and third-party models such as Claude (Anthropic)

These models are typically used in the following tiers:

- Standard Tier: Commonly mapped to Flash Lite or Flash, suited for cost-effective, general-purpose workloads.

- Advanced Tier: Commonly mapped to Flash and Pro (or another higher-capability configuration) and designed for:

  - Complex reasoning scenarios

  - Use cases requiring large context windows

  - Deep analysis across extensive infrastructure and observability data

To ensure Copilot can effectively support OpsRamp use cases, at least one configured model must meet the minimum requirements for advanced reasoning and large-context analysis.

Copilot Model Tiers: Standard vs Advanced

Copilot supports separate Standard and Advanced model tiers to ensure the right balance between performance, cost efficiency, and analytical depth. Each tier is optimized for different types of operational workflows and investigation scenarios.

Copilot automatically selects which tier to use for each request. Based on the complexity of the plan the agent builds (for example, whether it needs multi-step reasoning, broader context, or deeper correlation across alerts/tickets/metrics), Copilot will route the request to either the Standard or Advanced model tier.
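The routing idea can be pictured with a small sketch. This is purely illustrative and not Copilot's actual logic: the `Plan` fields, the thresholds, and the fallback to the Standard tier when no Advanced model is configured are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Plan:
    """Hypothetical summary of the plan an agent builds for one request."""
    steps: int          # number of reasoning steps in the plan
    data_sources: int   # how many sources it correlates (alerts, tickets, metrics)


def choose_tier(plan: Plan, advanced_model_id: Optional[str]) -> str:
    """Pick a tier for a request; the thresholds are illustrative, not OpsRamp's."""
    needs_advanced = plan.steps > 3 or plan.data_sources > 1
    # Assumption: without an Advanced Tier Model ID, every request stays on Standard.
    if needs_advanced and advanced_model_id:
        return "advanced"
    return "standard"


print(choose_tier(Plan(steps=1, data_sources=1), "advanced-model-id"))
print(choose_tier(Plan(steps=5, data_sources=3), "advanced-model-id"))
```

The point of the sketch is only that tier selection is driven by properties of the plan rather than by anything the user sets per request.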

How to Enable Copilot Using Your Own LLM

To activate Copilot:

Navigate to Setup → Account → Credentials.

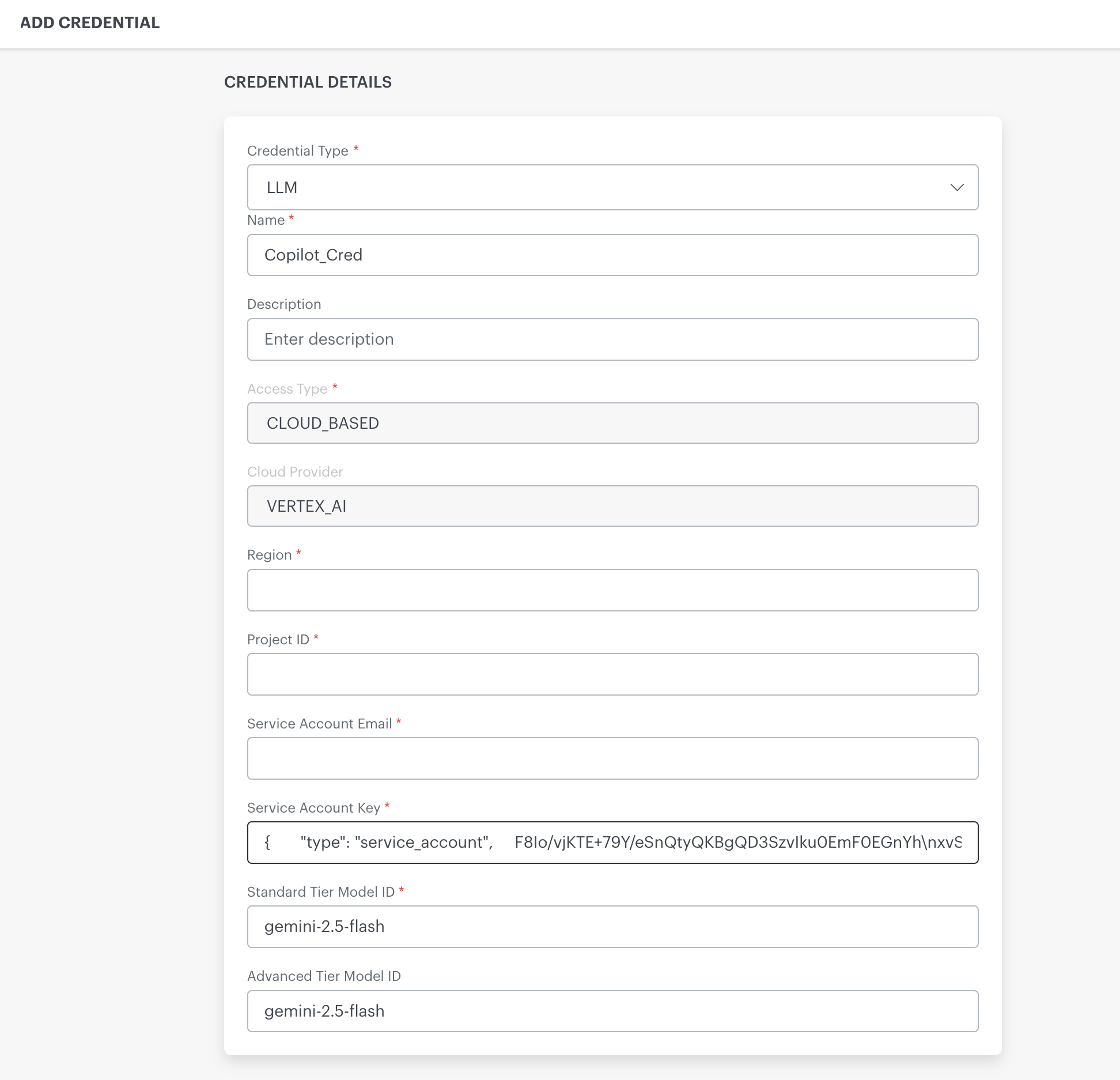

Click +ADD.The ADD CREDENTIAL page is displayed.

Fill all mandatory fields:

| Field Name | Required | Description | Example |
|---|---|---|---|
| Credential Type | Yes | Must be set to LLM to indicate this credential is for Copilot. Note: When you select LLM as the Credential Type, the acceptance/consent box is displayed. This is a one-time activity. | LLM |
| Name | Yes | A name for this credential. Appears in the credentials list. | Copilot_Cred |
| Description | Optional | Optional notes about the credential. | "Copilot LLM credential for tenant" |
| Access Type | Yes | Specifies where the model is hosted. | CLOUD_BASED |
| Cloud Provider | Yes | The cloud platform supplying the LLM. | VERTEX_AI |
| Region | Yes | GCP region where your Vertex AI model and service account are deployed. Must match your GCP setup. | us-central1 |
| Project ID | Yes | The ID of the GCP project that contains your Vertex AI setup. | |
| Service Account Email | Yes | Email of the service account with permissions to invoke LLM models through Vertex AI. | |
| Service Account Key | Yes | Full JSON key of the service account. Must be pasted exactly as exported from GCP. | { "type": "service_account", ... } |
| Standard Tier Model ID | Yes | Model ID for standard tier inference. | claude-sonnet-4-6 |
| Advanced Tier Model ID | Optional | Model ID for advanced tier workloads (if applicable). | |

- Click Save.

Once the credential is saved, Copilot switches from disabled to enabled.
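If you prepare the field values ahead of time (for example, in a runbook), a short check can confirm that nothing mandatory is missing before you fill in the form. The sketch below is a local helper, not an OpsRamp API: the field names mirror the table, while the function name and the sample Project ID and Service Account Email values are hypothetical placeholders.

```python
# Fields marked "Yes" in the Required column of the credential form.
REQUIRED_FIELDS = [
    "Credential Type", "Name", "Access Type", "Cloud Provider", "Region",
    "Project ID", "Service Account Email", "Service Account Key",
    "Standard Tier Model ID",
]


def missing_required(form: dict[str, str]) -> list[str]:
    """Return the required field names that are absent or blank in the prepared values."""
    return [name for name in REQUIRED_FIELDS if not form.get(name, "").strip()]


# Example values; Project ID and Service Account Email are placeholders.
form = {
    "Credential Type": "LLM",
    "Name": "Copilot_Cred",
    "Access Type": "CLOUD_BASED",
    "Cloud Provider": "VERTEX_AI",
    "Region": "us-central1",
    "Project ID": "example-project",
    "Service Account Email": "copilot-sa@example-project.iam.gserviceaccount.com",
    "Service Account Key": "",  # left blank here to show the check firing
    "Standard Tier Model ID": "claude-sonnet-4-6",
}
print(missing_required(form))
```

Description and Advanced Tier Model ID are optional in the form, so the check deliberately ignores them.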

Completing the Setup

After saving your credentials:

- Copilot becomes enabled.

- All Copilot channels (Search, Command Center, PRC, Network (Beta)) start using this LLM.

- No additional activation, restart, or configuration is required.